TR2026-052

LLMPhy: Parameter-Identifiable Physical Reasoning Combining Large Language Models and Physics Engines


Cherian, A., Corcodel, R., Jain, S., Romeres, D., "LLMPhy: Parameter-Identifiable Physical Reasoning Combining Large Language Models and Physics Engines", International Conference on Artificial Intelligence and Statistics (AISTATS), May 2026.

@inproceedings{Cherian2026may,
  author = {Cherian, Anoop and Corcodel, Radu and Jain, Siddarth and Romeres, Diego},
  title = {{LLMPhy: Parameter-Identifiable Physical Reasoning Combining Large Language Models and Physics Engines}},
  booktitle = {International Conference on Artificial Intelligence and Statistics (AISTATS)},
  year = 2026,
  month = may,
  url = {https://www.merl.com/publications/TR2026-052}
}



Research Areas:

Artificial Intelligence, Computer Vision, Machine Learning, Multi-Physical Modeling

Abstract:

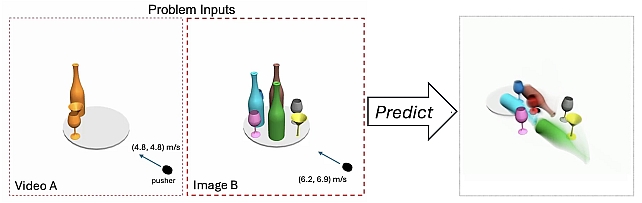

Most learning-based approaches to complex physical reasoning sidestep the crucial problem of parameter identification (e.g., mass, friction) that governs scene dynamics, despite its importance in real-world applications such as collision avoidance and robotic manipulation. In this paper, we present LLMPhy, a black-box optimization framework that integrates large language models (LLMs) with physics simulators for physical reasoning. The core insight of LLMPhy is to bridge the textbook physical knowledge embedded in LLMs with the world models implemented in modern physics engines, enabling the construction of digital twins of input scenes via latent parameter estimation. Specifically, LLMPhy decomposes digital twin construction into two subproblems: (i) a continuous problem of estimating physical parameters and (ii) a discrete problem of estimating scene layout. For each subproblem, LLMPhy iteratively prompts the LLM to generate computer programs encoding parameter estimates, executes them in the physics engine to reconstruct the scene, and uses the resulting reconstruction error as feedback to refine the LLM’s predictions. As existing physical reasoning benchmarks rarely account for parameter identifiability, we introduce three new datasets designed to evaluate physical reasoning in zero-shot settings. Our results show that LLMPhy achieves state-of-the-art performance on our tasks, recovers physical parameters more accurately, and converges more reliably than prior black-box methods.
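The propose, simulate, score, and feed-back loop described in the abstract can be illustrated with a minimal sketch. This is not the authors' implementation: the physics engine is replaced by a toy one-dimensional sliding-block model (`simulate_slide`), the LLM call is replaced by a hypothetical deterministic stub (`stub_proposer`) that returns a number directly instead of a generated program, and only a single friction coefficient is estimated. All function names and constants here are assumptions chosen for illustration.

```python
from typing import Callable, List, Tuple

G = 9.81  # gravitational acceleration in m/s^2

History = List[Tuple[float, float]]  # (friction estimate, reconstruction error)


def simulate_slide(mu: float, v0: float = 2.0, dt: float = 0.05,
                   steps: int = 40) -> List[float]:
    """Toy stand-in for a physics engine: block sliding with Coulomb friction."""
    x, v, trajectory = 0.0, v0, []
    for _ in range(steps):
        v = max(0.0, v - mu * G * dt)  # friction decelerates until the block stops
        x += v * dt
        trajectory.append(x)
    return trajectory


def reconstruction_error(simulated: List[float], observed: List[float]) -> float:
    """Mean squared error between simulated and observed positions."""
    return sum((s - o) ** 2 for s, o in zip(simulated, observed)) / len(observed)


def stub_proposer(history: History) -> float:
    """Hypothetical placeholder for the LLM: perturbs the best estimate so far.

    A real system would instead prompt an LLM with the history and parse
    its answer; that step is not modelled here.
    """
    if not history:
        return 0.5  # initial guess with no feedback yet
    best_mu, _ = min(history, key=lambda h: h[1])
    step = 0.4 * 0.7 ** len(history)            # shrink the step each round
    direction = 1 if len(history) % 2 else -1   # alternate search direction
    return min(1.0, max(0.0, best_mu + direction * step))


def fit(observed: List[float], propose: Callable[[History], float],
        n_iters: int = 15) -> Tuple[float, float]:
    """Iterate: propose a parameter, simulate, score, append to the feedback."""
    history: History = []
    for _ in range(n_iters):
        mu = propose(history)
        error = reconstruction_error(simulate_slide(mu), observed)
        history.append((mu, error))
    return min(history, key=lambda h: h[1])


if __name__ == "__main__":
    observed = simulate_slide(0.3)  # synthetic "ground truth" trajectory
    best_mu, best_error = fit(observed, stub_proposer)
    print(f"estimated mu = {best_mu:.3f}, error = {best_error:.2e}")
```

The only signal passed back to the proposer is the list of past estimates and their reconstruction errors, which is what makes the loop black-box: no gradients of the simulator are required.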